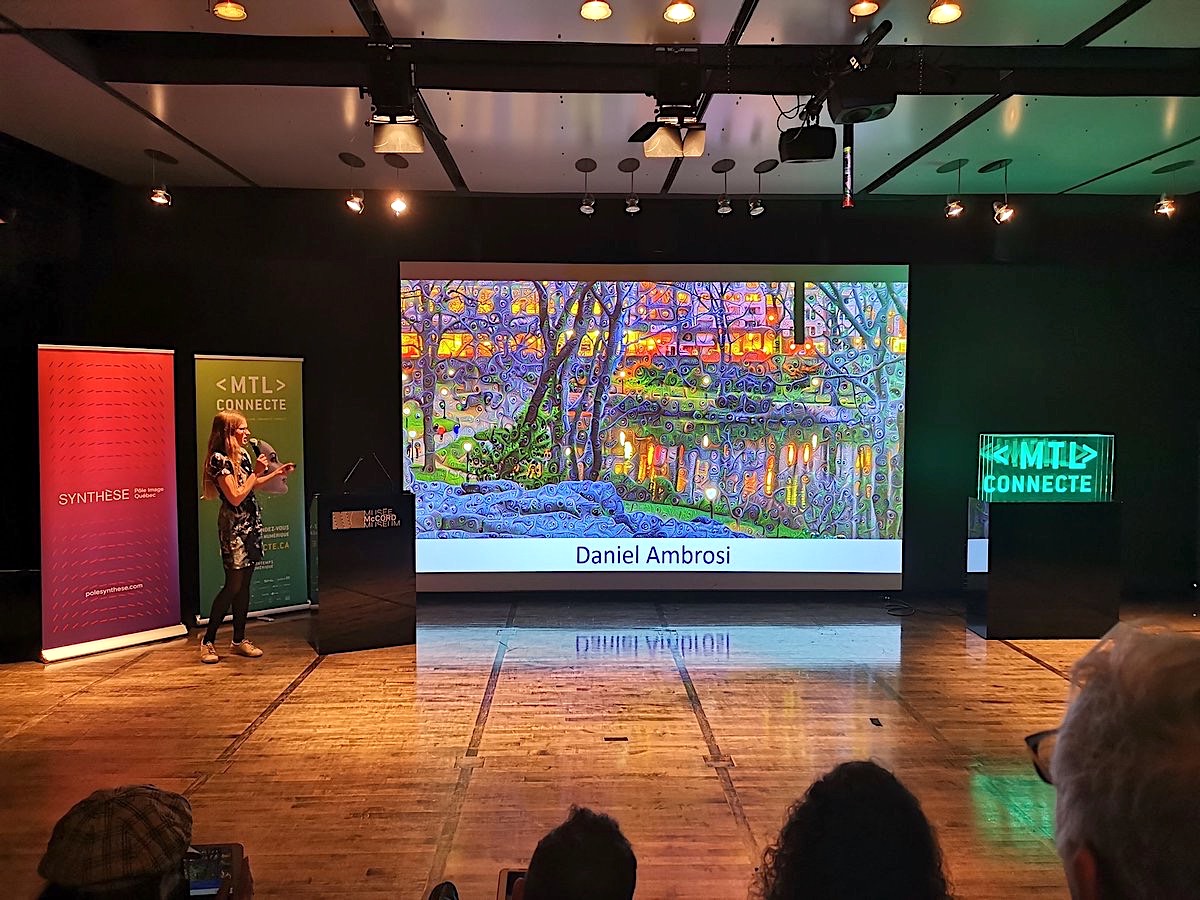

Dreaming Differently

Daniel Ambrosi has been exploring groundbreaking methods of visual presentation since graduating from Cornell University with a master's degree in 3D graphics and architecture. In 2011, he devised a unique form of computational photography that generates extremely high-resolution, immersive, and vibrant images. In 2016, he created his first images in the Dreamscapes series, building on his previous experiments by adding a powerful new graphics tool: a modified version of “DeepDream,” a unique computer vision program that grew out of Google engineers’ desire to visualize the inner workings of deep learning artificial intelligence models.

CONCEPTION

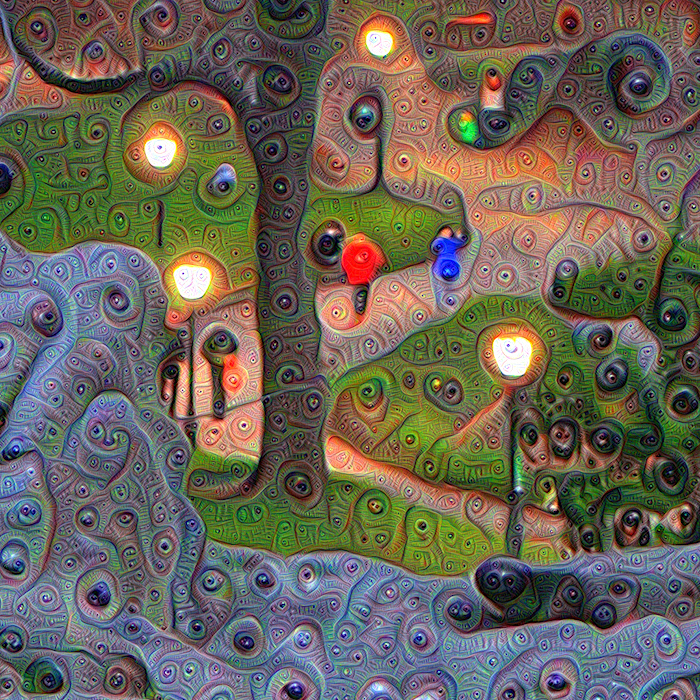

In July 2015, Google released an open-source software package called “DeepDream,” which quickly became a viral sensation. When applied to images, photographic or otherwise, this artificial intelligence (AI) program imbued the originals with complex, even hallucinatory, patterns and textures. While a few people were able to generate intriguing results, most used the software as a novelty, turning their snapshots into psychedelic nightmares. Nonetheless, I sensed an opportunity to use the software with more subtlety, in hopes of bringing a sophisticated expressiveness to the giant landscape images I had been creating over the previous four years. I produced a series of low-resolution tests with promising results, which were shared internally at Google to much acclaim; one individual on the DeepDream team remarked, “I love how this is being used as an artistic tool beyond just a weird curiosity.”

ENGINEERING

Fortunately, a few weeks later I was able to enlist two brilliant engineers, Joseph Smarr (Google) and Chris Lamb (NVIDIA), to try modifying the DeepDream source code for my purposes. It took them over four months of sporadic effort on nights and weekends to achieve “liftoff” with one of my full-resolution scenes. In late January 2016, they handed off their modified DeepDream code to me, and my real work began. I am the only person with access to this code and, as far as I know, no one else has undertaken a similar engineering effort or is “dreaming” on images at this scale.

INTENT

I am drawn to “special places,” unique locations in our world of such breathtaking beauty and grandeur that the scene goes beyond mere visual perception and becomes a visceral experience. When that happens, I invariably find myself waxing philosophical, pondering the truth behind what I’m seeing and questioning reality itself. Traditional photography has failed to capture my experience in a way that lets me share with others the powerful connection that is quickly forged between eyes, body, and mind. Being an analytical and tenacious person equipped with a strong background in design, art history, and computer graphics, I experienced two breakthroughs while trying to “deliver” this personal experience. The first breakthrough was my development of the “XYZ photography” method, a blending of panoramic and high dynamic range techniques that results in unusually high-resolution, immersive, and vibrant images. Judging from the most common response, “It feels like I can step right into this scene,” these images appear to reach people both visually and viscerally.

The second breakthrough came in 2016 through DeepDream code modified by my engineering collaborators. Every square inch of my mural-sized landscape images was transformed with wholly unexpected form and content that is revealed only upon close-up viewing. This combination of computational photography (my XYZ method) and artificial intelligence (my modified DeepDream code), placed in service of this specific artistic intent, is both unique and original.

PROCESS

DeepDream, especially as modified by my engineering team, is incredibly powerful software with an enormous range of options from which to choose the desired dreaming style and characteristics. The key was hosting their software on a monster cloud-based compute server with four separate graphics processing units (GPUs): a supercomputer in the sky, if you will. One benefit of this approach is that it lets me run four different experiments at a time, one on each GPU. This made it fairly quick and straightforward for me to exhaustively catalog the “macro” style of all 84 layers of DeepDream’s neural network, and to fully understand the effects of tweaking the four parameter settings that can be applied to each of these styles.
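To make the cataloging workflow more concrete, here is a minimal sketch of how one test run per layer might be spread across four GPUs so that four experiments run at once. It is an illustration under stated assumptions, not the actual modified DeepDream code, which is not public. The layer identifiers (`layer_00` to `layer_83`), the four parameter names and their values, and the helper functions `build_jobs` and `assign_round_robin` are all hypothetical, because the statement does not name them.

```python
from itertools import product

# Hypothetical placeholders: the statement mentions 84 layers and four
# parameter settings but names neither, so generic identifiers are used.
LAYERS = [f"layer_{i:02d}" for i in range(84)]
NUM_GPUS = 4

def build_jobs(layers, settings):
    """Create one experiment per (layer, parameter setting) combination."""
    return [{"layer": layer, **setting} for layer, setting in product(layers, settings)]

def assign_round_robin(jobs, num_gpus):
    """Deal jobs out in turn so each GPU gets an equal share of experiments."""
    queues = {gpu: [] for gpu in range(num_gpus)}
    for i, job in enumerate(jobs):
        queues[i % num_gpus].append(job)
    return queues

# One baseline setting per layer captures each layer's "macro" style.
# The four parameter names and values are illustrative guesses only.
baseline = [{"iterations": 10, "step_size": 1.5, "octaves": 4, "octave_scale": 1.4}]
queues = assign_round_robin(build_jobs(LAYERS, baseline), NUM_GPUS)

for gpu, jobs in queues.items():
    print(f"GPU {gpu}: {len(jobs)} runs, starting with {jobs[0]['layer']}")
```

With a single baseline setting, the 84 layers divide evenly into 21 runs per GPU. To sweep one parameter, you would add more dictionaries to `baseline`. Each additional setting multiplies the job count by the number of layers, which shows why having four GPUs working in parallel matters.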


UNIQUE EXECUTION

The unique setup and approach I’ve taken to using DeepDream has resulted in compelling large-format illuminated works of great impact and stunning detail. I’ve witnessed an amazing degree of crossover appeal, from adults to children, from security guards to CEOs, and from sophisticated art curators to laypeople: everyone seems to be captivated and fascinated by my Dreamscapes. I believe part of this appeal is due to my decision to rely on LED-backlit tension fabric structures to project my artistic intent, and to the research I’ve undertaken to find the highest-quality supplier. I haven’t seen any other DeepDream artists using this medium, and if they choose to, they will learn that the providers of these systems are not all equal.

SYNERGY

As I reflect on this project and try to explain how my Dreamscapes differ from other applications of DeepDream, one guiding principle comes to mind: my intention to actively and deeply collaborate with an artificial intelligence. I hold no illusion that this intelligence is sentient. Still, unlike others who may have a passing interest in seeing what an off-the-shelf version of DeepDream can do to their images, I am engaged in a relationship with this intelligence that is pushing each of us to develop and mature. My ingenious engineering colleagues have granted DeepDream superpowers. In turn, this modified version of open-source software has unlocked a superpower for me: I can now create compelling works of art with a complexity and richness that I could never fully execute on my own.

Interestingly, accepting this superpower has required giving up a degree of control. I can’t really tell the software exactly what to do, and in fact, I honestly don’t fully understand how or why it does what it does. I believe many of us will have to make this bargain in our future work, or even in daily life, as artificial intelligence and deep learning systems rapidly advance. To me, though, this is an optimistic story, because the computer is in no sense trying to replace me, thwart my intentions, or suppress my vision. After all, it has no innate desire to create art, nor any ability to discern which parameter settings other humans will find most aesthetically pleasing. It’s just a tool, albeit a very powerful one that is somewhat beyond our comprehension. Ultimately, I still decide how to steer it and what to keep or discard.

My images are my attempt to remind myself (and others) that we are all actively participating in a shared waking dream. Science shows us that our limited senses perceive a tiny fraction of the phenomena that comprise our world. No doubt, there is much more going on than meets the eye.

“The underlying focus of my work is to reverse engineer the psychology behind the human experience of special places. What I mean by ‘special places’ are precise locations in our world where something very powerful happens; namely, a reaction that goes beyond the visual to also encompass a visceral and cognitive response.”

— Daniel Ambrosi